Adobe Premiere Pro AI Feature Demo

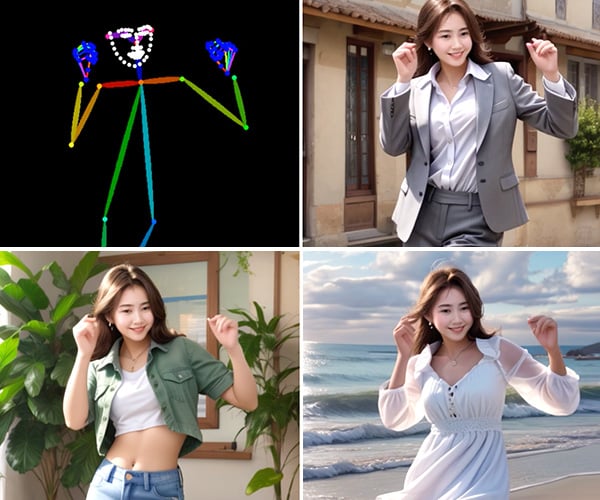

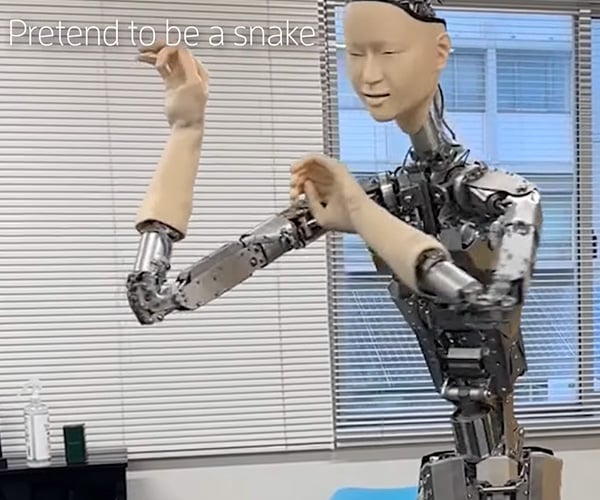

Generative AI can be used for good or evil. In the case of Adobe Premiere Pro, we’re excited about the potential of its AI tools to improve video editing workflows: they let you add or remove objects with just a couple of clicks and extend scenes that are too short for your timeline. Adobe is also testing integration with third-party models such as OpenAI’s Sora to generate entire scenes.